“In other words, the man who is born into existence deals first with language; this is a given. He is even caught in it before his birth.”

(J. Lacan)

PS: Someone didn’t realize that this is a dialogue in which I wrote every utterance myself (believe it or not) and asked me whether I had trained an RNN. Well, no. This isn’t Artificial Intelligence. It’s about Artificial Intelligence.

G.B. – I’d like to start this discussion with a hot topic. Are you afraid of Artificial Intelligence?

J.L. – When interpreted as average daily messages, I’m afraid of human desires.

G.B. – Can you explain this concept in further detail?

J.L. – A desire is a strange thing. It’s bizarre, to be honest. It can be simple, but at the same time, it can hide something we cannot decode. I’m really afraid of all-powerful people because they can start thinking that they deserve a special jouissance (in English, think about terms like pleasure, enjoyment, possession).

G.B. – What’s so strange about it?

J.L. – The strange thing is something that Marx understood perfectly and that I’ve called plus-jouissance. Political power is a particular territory where a person can imagine finding his or her mother’s breast again. In other words, I’m afraid when a person thinks that it’s possible to kill, impose absurd laws, and get richer and richer only because the majority of other people stay on the other side.

G.B. – Is it enough to be afraid of Artificial Intelligence?

J.L. – It’s enough to be afraid of many things, but we must also trust human beings. Artificial Intelligence is becoming another object on which the plus-jouissance can focus its attention. Frankly, I don’t like science fiction, and I don’t think of AI as another nuclear power. Maybe it’s only a bluff, hype to feed some marketing manager, but it seems that Artificial Intelligence behaves in a weird way. It’s much less pragmatic than a hydrogen bomb but much more flexible.

G.B. – Maybe I’m beginning to understand. When you say flexible, do you mean that it has more degrees of freedom?

J.L. – Degrees of freedom as attraction points.

G.B. – A crazy politician could start a war even without knowing what AI is, am I correct?

J.L. – He knows perfectly well what AI is. AI is a toy asking to become a womb for a new plus-jouissance.

G.B. – Well, but let’s talk about the results. I want to be straight again: are you a reductionist?

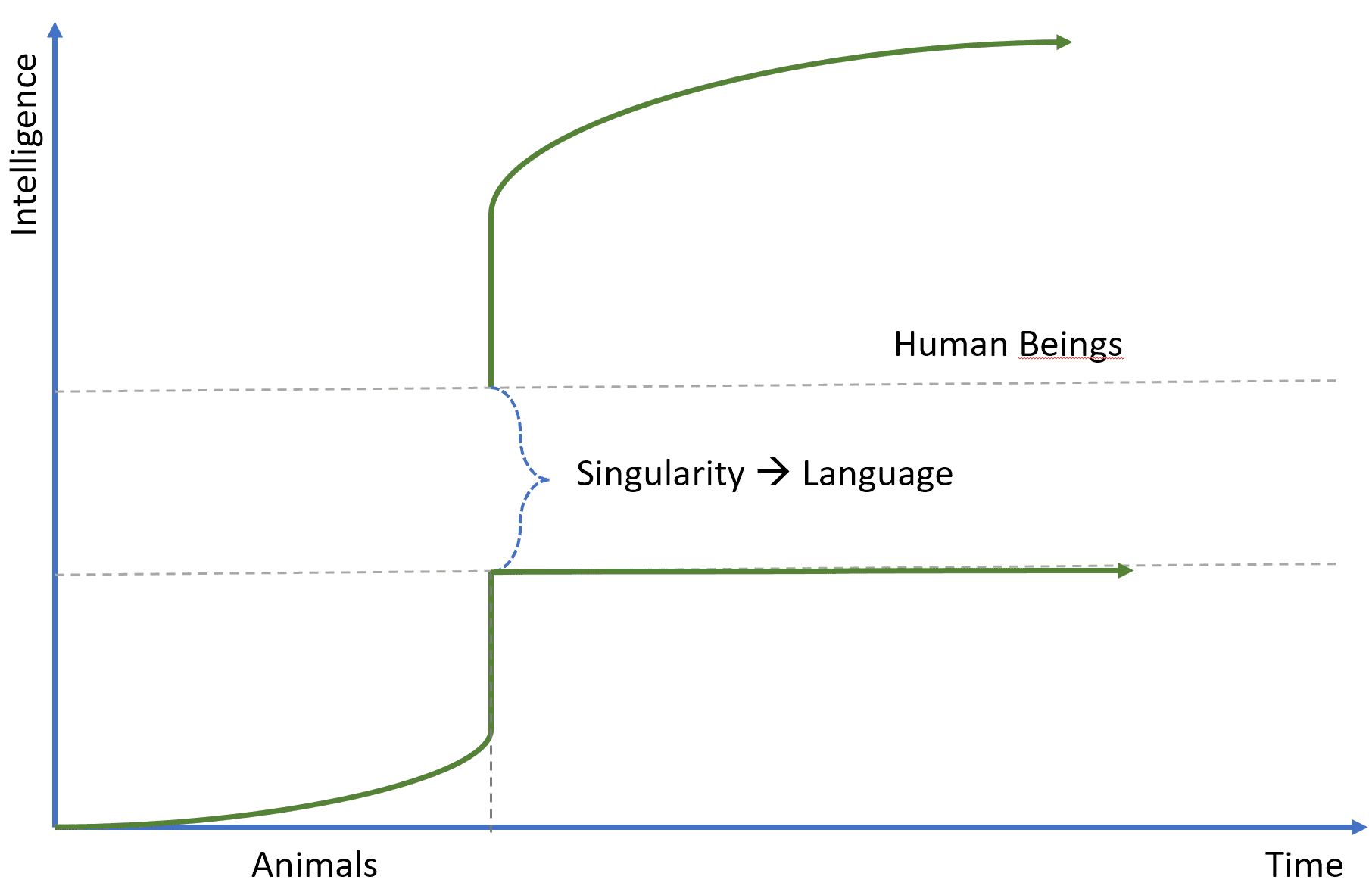

J.L. – Freud was a reductionist but failed to find all the evidence. Humans are animals, but quite different from dogs and monkeys. Look at this diagram:

We can suppose that until a particular instant, all animals were similar, and they developed their intelligent abilities according to the structure of their brain. However, there was an instant when human beings started developing the most crucial concept of history: language. It created a singularity, altering the smoothness of the evolution line.

G.B. – Why is language so important?

J.L. – It’s vitally important because it broke the original relationship with the jouissance. Human beings began describing and representing the world using their language. This allowed them to solve many problems, asking more and more questions and finding more and more answers, but… They had to pay a price. If they were somehow complete before the singularity (this isn’t a scientific assumption), they found themselves with a missing part after that moment.

G.B. – Which part?

J.L. – Impossible to say. The missing part is precisely what constantly escapes the signification process.

G.B. – Well, but how can this explain the power of Artificial Intelligence?

J.L. – Probably it doesn’t, but, in my opinion, the best arena for AI is not computation. It’s language. Do you know the difference between moi and je?

G.B. – Moi is the subject who talks, while je is the subject who desires. Correct?

J.L. – More or less. Up to a certain point, it’s easy to reduce some brain processes to the functions of a moi. A well-developed, advanced chatbot could be considered a limited human being who has always lived inside a miniature universe.

G.B. – It’s not very hard to imagine a chat with such an artificial agent.

J.L. – Yes, it’s possible. But my question is: did it (or he, or she?) enter the world of language like any human being?

G.B. – Are you wondering if it has lost the jouissance?

J.L. – Exactly. Now, I may seem mad, but can a chatbot desire? Does a chatbot je exist?

G.B. – This is probably irrelevant to the development of AI. Or are you asking me if there will be psychoanalysts for chatbots?

J.L. – It’s irrelevant because your definition of intelligence is based on a rigid schema, mainly derived from utility theory. Your agents are considered rational and intelligent if they can adapt their behavior to environmental changes so as to maximize their current and future advantages.

G.B. – Reinforcement learning.

J.L. – Do you think a reinforcement learning algorithm governs all human processes? Imagine two agents enclosed in a rectangular universe. There are enough resources to keep them alive for a very long time. What’s the most rational behavior?

G.B. – If we set game theory aside and assume that both agents know precisely the same things, the most rational strategy is to divide the resources: each agent consumes a unit only after the other agent has done the same.

J.L. – Do human beings act this way?

G.B. – To be honest… No, they often don’t.

J.L. – And what about love? Hate? Are they explainable using reinforcement learning? If the purpose is reproduction, humans should copulate like dogs or rabbits. Don’t you think?

G.B. – Indeed, this is a very delicate problem. Many researchers prefer to avoid it. Others say that emotions are the effect of chemical reactions. On the other hand, if I drink a glass of whiskey, I feel different and uninhibited. It’s impossible to deny that emotions, too, are driven by chemical processes.

J.L. – Probably they are, but I don’t care. No one cares. When you fall in love with a woman, do you ask yourself which combination of neurotransmitters determined that event? I think I’m more software-oriented than you! If a message appears on a monitor, it’s evident that some electronic circuits made it happen, but this answer doesn’t satisfy you. Am I correct?

G.B. – I’d like to know which software determined the initial cause.

J.L. – Exactly. Returning to our chatbots, I want to be more challenging (and maybe annoying): do they have a consciousness?

G.B. – I don’t know.

J.L. – Do they have a subconscious?

G.B. – Why should they have one?

J.L. – Oh my God! Because you talk about artificial intelligence by comparing your agents to human beings! Maybe it’d be easier if you took a rat as a benchmark!

G.B. – Rats are an excellent benchmark for studying reinforcement learning. Have you ever heard about rats in a maze?

J.L. – Well, this is something I can accept. But you’re lowering your aim! I read all the news about Facebook and its chatbots that invented a language.

G.B. – Hype. They were unable to communicate in English. But too many people thought they were facing a Terminator scenario.

J.L. – That’s why someone can be afraid of AI! You are confirming what I said at the beginning of this dialogue. A nuclear bomb explodes and kills a million people. Full stop. Everything is clear. If you’re the leader of a big nation, you could be interested in nuclear weapons because they are “useful” in some situations. But, at the same time, it’s easier to oppose such a decision. With AI, the situation is entirely different because sometimes there’s a lack of scientific rigor.

G.B. – You made the same complaint about psychoanalysis. For this reason, you introduced the matheme notation.

J.L. – The beauty of mathematics is its objectivity, and I hope that the most important philosophical concepts regarding AI will one day be formalized.

G.B. – I think it’s easier to formalize an algorithm.

J.L. – Of course, it is. But an algorithm for the subconscious must be interpreted entirely differently; sometimes, that can be impossible! That’s why I keep saying that the best arena for artificial intelligence is a linguistic world. I want to finish with a quote from one of my seminars: “Love is giving something you don’t have to someone who doesn’t want it.” I’m still waiting for a rational artificial agent that struggles to give something it doesn’t have to another agent that refuses it! Can reinforcement learning explain it?

G.B. – Just silence.